AWS Elastic Load Balancing with Terraform: Best Practices for High Traffic Systems

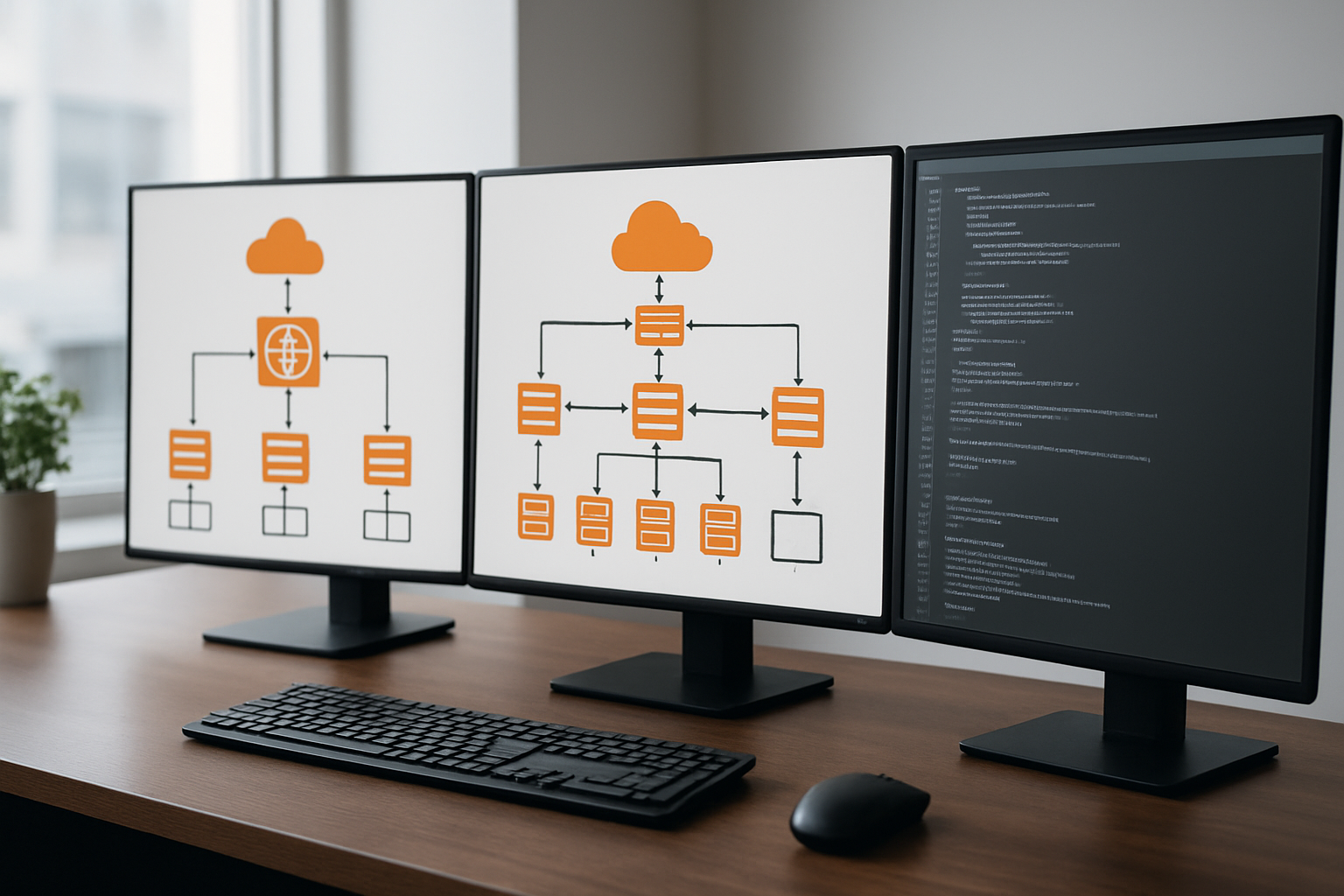

AWS Elastic Load Balancing with Terraform transforms how you manage high-traffic systems, but getting the configuration right can make or break your application’s performance. This guide targets DevOps engineers, cloud architects, and infrastructure teams who need to build rock-solid load balancer setups that won’t buckle under pressure.

When your application needs to handle thousands of requests per second, proper Terraform AWS infrastructure planning becomes critical. You can’t just spin up a basic load balancer and hope it works – you need proven strategies that scale.

We’ll walk through essential Terraform ELB configuration patterns that have been battle-tested in production environments. You’ll learn how to set up Application Load Balancers (ALB) using Terraform infrastructure as code, including the specific resource blocks and variables that prevent common mistakes.

Next, we’ll dive into high-traffic architecture design patterns that keep your systems running smoothly. This covers everything from multi-AZ deployments to connection draining strategies that maintain user sessions during deployments.

Finally, we’ll cover AWS ELB security hardening techniques you can implement through Terraform. This includes SSL/TLS configuration, security group rules, and WAF integration that protects your load balancers without slowing them down.

By the end, you’ll know how to configure load balancers through Terraform that deliver both performance and reliability for your high-traffic applications.

Understanding AWS Elastic Load Balancing Types for Optimal Performance

Application Load Balancer Benefits for HTTP/HTTPS Traffic

Application Load Balancers excel at managing modern web applications with advanced routing capabilities. They operate at Layer 7, enabling content-based routing, SSL termination, and WebSocket support. ALBs integrate seamlessly with AWS services like Auto Scaling Groups and ECS, making them perfect for microservices architectures. Their ability to route requests based on hostnames, paths, and headers provides granular traffic control that Network Load Balancers can’t match.

When configuring AWS ALB Terraform resources, you get built-in health checks, sticky sessions, and HTTP/2 support out of the box. ALBs handle SSL certificate management through AWS Certificate Manager, automatically renewing certificates and reducing operational overhead. This makes them the go-to choice for high traffic load balancer scenarios requiring sophisticated request routing and modern web protocol support.

Network Load Balancer Advantages for Ultra-High Performance

Network Load Balancers deliver exceptional performance by operating at Layer 4, handling millions of requests per second with ultra-low latency. They preserve source IP addresses and support static IP allocation, making them ideal for applications requiring direct client connections. NLBs excel in TCP/UDP traffic scenarios where raw throughput matters more than application-layer intelligence.

For Terraform infrastructure as code implementations, NLBs provide predictable performance characteristics and simplified configuration. They scale to absorb sudden traffic spikes without warmup periods and keep latency very low even under extreme load. This makes them a strong fit for gaming applications, financial trading systems, and IoT workloads where latency matters more than application-layer features.

Gateway Load Balancer Use Cases for Third-Party Appliances

Gateway Load Balancers (GWLBs) serve as transparent network gateways for deploying third-party virtual appliances at scale. They operate at Layer 3 and pass traffic to appliances over GENEVE encapsulation, which lets you insert security tools, firewalls, and intrusion detection systems without disrupting existing network architectures. GWLBs distribute traffic across multiple appliance instances while keeping each flow pinned to the same appliance, so stateful inspection keeps working.

This load balancer type simplifies deployment of network security solutions through Terraform AWS infrastructure automation. You can easily scale security appliances based on traffic patterns while ensuring high availability through health checks. Gateway Load Balancers are essential for organizations requiring centralized security inspection points or compliance with specific network security frameworks.

Classic Load Balancer Legacy Considerations

Classic Load Balancers represent the original AWS load balancing solution, now considered legacy technology. While they support both Layer 4 and Layer 7 operations, they lack the advanced features and performance optimizations of newer load balancer types. CLBs were built for EC2-Classic, which AWS has since retired, and in a VPC they offer limited routing capabilities compared to Application Load Balancers.

When maintaining existing Terraform ELB configuration with Classic Load Balancers, plan migration strategies to modern alternatives. They still function reliably for simple use cases but miss features like WebSocket support, HTTP/2, and advanced health checks. Consider them only for legacy applications that can’t easily migrate to ALB or NLB architectures.

Essential Terraform Configuration for Load Balancer Infrastructure

Provider Setup and AWS Credentials Management

Setting up your Terraform AWS provider correctly forms the backbone of reliable infrastructure deployment. Configure your provider with explicit region settings and version constraints to ensure consistent deployments across environments. Use AWS credential profiles or IAM roles rather than hardcoded keys for enhanced security. The terraform block should specify required provider versions to prevent unexpected changes during infrastructure updates.
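A minimal sketch of the provider setup described above. The version constraints, the `aws_region` variable, and the `example-profile` profile name are illustrative assumptions, not values from a real environment.

```hcl
terraform {
  # Pin the CLI and provider so every environment resolves the same versions.
  required_version = ">= 1.5.0"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0" # assumed major version; pin to what you have tested
    }
  }
}

variable "aws_region" {
  type    = string
  default = "us-east-1" # assumed default region
}

provider "aws" {
  region  = var.aws_region
  profile = "example-profile" # hypothetical named profile; no hardcoded keys
}
```

In CI, dropping `profile` and letting the provider pick up an IAM role from the environment is a common alternative.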

VPC and Subnet Configuration Best Practices

Your Terraform AWS infrastructure needs properly configured VPCs spanning multiple availability zones for high availability. Create public subnets for Application Load Balancers and private subnets for backend resources. Enable DNS hostnames and resolution within your VPC configuration to support load balancer functionality. Design subnet CIDR blocks with future scaling in mind, allowing room for additional resources as your high traffic load balancer requirements grow.
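The layout above could look roughly like this. The CIDR range and the count of two zones are assumptions; the internet gateway, NAT, and route tables are left out to keep the sketch short.

```hcl
data "aws_availability_zones" "available" {
  state = "available"
}

resource "aws_vpc" "main" {
  cidr_block           = "10.0.0.0/16" # assumed range
  enable_dns_support   = true
  enable_dns_hostnames = true
}

# Public subnets for the internet-facing load balancer, one per zone.
resource "aws_subnet" "public" {
  count             = 2
  vpc_id            = aws_vpc.main.id
  cidr_block        = cidrsubnet(aws_vpc.main.cidr_block, 4, count.index)
  availability_zone = data.aws_availability_zones.available.names[count.index]
}

# Private subnets for backend targets, offset so the /20 blocks
# between the two groups stay free for future growth.
resource "aws_subnet" "private" {
  count             = 2
  vpc_id            = aws_vpc.main.id
  cidr_block        = cidrsubnet(aws_vpc.main.cidr_block, 4, count.index + 8)
  availability_zone = data.aws_availability_zones.available.names[count.index]
}
```

With a /16 and four extra prefix bits, each subnet is a /20, leaving twelve of the sixteen blocks unallocated.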

Security Group Rules for Load Balancer Access

Security groups act as virtual firewalls controlling traffic flow to your load balancer infrastructure. Define ingress rules allowing HTTP (port 80) and HTTPS (port 443) from appropriate source ranges. Create separate security groups for load balancers and target instances, following the principle of least privilege. Use Terraform variables for common ports and CIDR blocks to maintain consistency across environments while enabling easy updates.
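One way to express the two-group pattern, as a sketch. The variable names (`vpc_id`, `allowed_cidrs`, `app_port`) and their defaults are hypothetical inputs.

```hcl
variable "vpc_id" { type = string }

variable "allowed_cidrs" {
  type    = list(string)
  default = ["0.0.0.0/0"] # assumed: public-facing service
}

variable "app_port" {
  type    = number
  default = 8080 # assumed application port
}

resource "aws_security_group" "alb" {
  name_prefix = "alb-"
  vpc_id      = var.vpc_id

  # One ingress rule per public port.
  dynamic "ingress" {
    for_each = [80, 443]
    content {
      from_port   = ingress.value
      to_port     = ingress.value
      protocol    = "tcp"
      cidr_blocks = var.allowed_cidrs
    }
  }

  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }
}

resource "aws_security_group" "app" {
  name_prefix = "app-"
  vpc_id      = var.vpc_id

  # Targets accept traffic only from the load balancer's security group.
  ingress {
    from_port       = var.app_port
    to_port         = var.app_port
    protocol        = "tcp"
    security_groups = [aws_security_group.alb.id]
  }

  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }
}
```

Referencing the load balancer's group instead of a CIDR keeps the rule valid when the load balancer's addresses change.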

Target Group Creation and Health Check Settings

Target groups define how your Terraform ELB configuration routes traffic to backend instances. Configure health check paths that accurately reflect application readiness, avoiding expensive operations that could impact performance. Set appropriate timeout values and healthy threshold counts based on your application’s response characteristics. Use multiple target groups for blue-green deployments and A/B testing scenarios in production environments.
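A target group with a lightweight health check might be sketched as follows. The `/healthz` path, port, and threshold values are assumptions to tune against your application.

```hcl
variable "vpc_id" { type = string }

resource "aws_lb_target_group" "app" {
  name_prefix = "app-" # at most six characters for target groups
  port        = 8080   # assumed application port
  protocol    = "HTTP"
  vpc_id      = var.vpc_id
  target_type = "instance"

  health_check {
    path                = "/healthz" # hypothetical cheap readiness endpoint
    matcher             = "200"
    interval            = 15 # seconds between checks
    timeout             = 5  # must be shorter than the interval
    healthy_threshold   = 3
    unhealthy_threshold = 2
  }

  # Lets Terraform create a replacement before destroying the old group.
  lifecycle {
    create_before_destroy = true
  }
}
```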

Load Balancer Resource Definition and Attributes

Define your AWS ALB Terraform resources with specific attributes matching your traffic patterns and requirements. Enable access logs for troubleshooting and compliance, storing them in dedicated S3 buckets. Configure deletion protection for production load balancers to prevent accidental removal, and set idle timeout values based on your application’s connection patterns. Cross-zone load balancing is always on at the load balancer level for ALBs; on Network Load Balancers it is off by default, so enable it there if you want even traffic distribution across availability zones.
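The attributes mentioned above map onto the `aws_lb` resource roughly like this. The input variables and the `example-alb` name are hypothetical, and the log bucket is assumed to already allow Elastic Load Balancing to write to it.

```hcl
variable "public_subnet_ids" { type = list(string) }
variable "alb_security_group_id" { type = string }
variable "log_bucket_name" { type = string }

resource "aws_lb" "app" {
  name               = "example-alb" # hypothetical name
  load_balancer_type = "application"
  internal           = false
  subnets            = var.public_subnet_ids # at least two zones
  security_groups    = [var.alb_security_group_id]

  idle_timeout               = 60   # seconds; raise for long-lived connections
  enable_deletion_protection = true # must be disabled before a destroy
  drop_invalid_header_fields = true

  access_logs {
    bucket  = var.log_bucket_name
    prefix  = "alb"
    enabled = true
  }
}
```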

High Traffic Architecture Design Patterns with Terraform

Multi-AZ Deployment Strategies for Fault Tolerance

Distributing your AWS Elastic Load Balancing infrastructure across multiple Availability Zones gives you automatic failover when a single zone has an outage. Your Terraform AWS infrastructure configuration should include subnet mappings that span at least two AZs, which an ALB requires in any case, so your high traffic load balancer maintains service continuity during zone-level failures.

Configure your Terraform ELB configuration with target groups that automatically route traffic away from unhealthy instances in affected zones. This approach provides built-in redundancy and meets enterprise-grade availability requirements for mission-critical applications handling thousands of concurrent users.

Cross-Region Load Balancing for Global Scale

AWS ALB Terraform deployments can use Route 53 health checks to implement intelligent DNS routing between regional load balancers. This setup distributes traffic based on latency, geographic location, or failover policies, which suits applications with an international user base.

Multi-region architectures require careful consideration of data consistency and session management. Your Terraform infrastructure as code should include cross-region VPC peering or Transit Gateway connections to enable seamless backend communication while maintaining optimal performance for users worldwide.
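As a sketch of the DNS layer, latency-based alias records can point at one load balancer per region. The `regional_albs` map, `hosted_zone_id`, and the `app.example.com` name are assumptions; this version relies on alias target health evaluation rather than standalone Route 53 health checks.

```hcl
variable "hosted_zone_id" { type = string }

# Hypothetical input: region name => that region's load balancer details.
variable "regional_albs" {
  type = map(object({
    dns_name = string
    zone_id  = string
  }))
}

resource "aws_route53_record" "app" {
  for_each = var.regional_albs

  zone_id        = var.hosted_zone_id
  name           = "app.example.com" # hypothetical record name
  type           = "A"
  set_identifier = each.key

  # Route 53 answers with the region that has the lowest latency to the client.
  latency_routing_policy {
    region = each.key
  }

  alias {
    name                   = each.value.dns_name
    zone_id                = each.value.zone_id
    evaluate_target_health = true # skip a region whose load balancer is unhealthy
  }
}
```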

Auto Scaling Group Integration for Dynamic Capacity

Auto Scaling Groups work with load balancers to adjust capacity automatically based on traffic patterns and performance metrics. Attach target tracking policies to the group so it adds instances during traffic spikes and removes them during quiet periods, maintaining cost efficiency.

High availability Terraform configurations should include CloudWatch alarms that trigger scaling actions before performance degrades. Set CPU utilization thresholds around 70% for scale-out events and implement predictive scaling for applications with known traffic patterns, ensuring your infrastructure adapts to demand fluctuations automatically.
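A sketch of an Auto Scaling Group registered with a target group and scaled by target tracking. The sizes, grace period, and input variables are assumptions. Target tracking holds average CPU near 70% rather than firing at a fixed threshold, and it creates the underlying CloudWatch alarms itself.

```hcl
variable "private_subnet_ids" { type = list(string) }
variable "target_group_arn" { type = string }
variable "launch_template_id" { type = string }

resource "aws_autoscaling_group" "app" {
  name_prefix               = "app-"
  min_size                  = 2  # assumed floor: one instance per zone
  max_size                  = 10 # assumed ceiling
  vpc_zone_identifier       = var.private_subnet_ids
  target_group_arns         = [var.target_group_arn]
  health_check_type         = "ELB" # replace instances the load balancer marks unhealthy
  health_check_grace_period = 120

  launch_template {
    id      = var.launch_template_id
    version = "$Latest"
  }
}

resource "aws_autoscaling_policy" "cpu" {
  name                   = "cpu-target-tracking"
  autoscaling_group_name = aws_autoscaling_group.app.name
  policy_type            = "TargetTrackingScaling"

  target_tracking_configuration {
    predefined_metric_specification {
      predefined_metric_type = "ASGAverageCPUUtilization"
    }
    target_value = 70
  }
}
```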

Performance Optimization Techniques for Heavy Workloads

Connection Draining Configuration for Seamless Updates

Connection draining prevents disruption during deployments by gracefully handling existing connections before removing instances from service. When you configure deregistration delay in your Terraform AWS infrastructure, active requests complete naturally while new traffic routes to healthy targets. This AWS load balancer optimization technique maintains user sessions during scaling events and application updates, preventing dropped connections that could impact user experience.

The deregistration delay accepts values from 0 to 3600 seconds and defaults to 300. Setting it between 30 and 300 seconds through your Terraform ELB configuration gives most applications time to finish in-flight requests; match it to your longest expected request. The load balancer stops sending new requests to draining targets while allowing existing connections to complete, so deployments can proceed without dropping active requests.
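In Terraform the delay is a single attribute on the target group. The 60-second value and the other settings here are assumptions for illustration.

```hcl
variable "vpc_id" { type = string }

resource "aws_lb_target_group" "api" {
  name_prefix = "api-"
  port        = 8080 # assumed application port
  protocol    = "HTTP"
  vpc_id      = var.vpc_id

  # Seconds a deregistering target keeps serving in-flight requests.
  deregistration_delay = 60

  # Optional: ramp traffic to newly registered targets over 30 seconds.
  slow_start = 30
}
```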

Sticky Sessions Implementation for Stateful Applications

Sticky sessions bind users to specific backend instances, essential for applications that store session data locally rather than in shared storage. Your Terraform infrastructure as code can configure duration-based or application-controlled cookie stickiness to maintain user state consistency. This approach works particularly well for legacy applications that weren’t designed for distributed environments.

Configure session affinity carefully to balance user experience with load distribution. While sticky sessions solve state management challenges, they can create uneven traffic patterns across your backend instances, potentially reducing the effectiveness of your AWS ALB Terraform configuration during high-traffic periods.
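Duration-based stickiness could be configured as below. The one-hour cookie lifetime is an assumed value; application-controlled stickiness would use `type = "app_cookie"` with a `cookie_name` instead.

```hcl
variable "vpc_id" { type = string }

resource "aws_lb_target_group" "legacy" {
  name_prefix = "lgcy-"
  port        = 8080 # assumed application port
  protocol    = "HTTP"
  vpc_id      = var.vpc_id

  stickiness {
    enabled         = true
    type            = "lb_cookie" # cookie generated by the load balancer
    cookie_duration = 3600        # seconds; assumed session length
  }
}
```

A shorter cookie duration limits how long an uneven distribution can persist after a scale-out.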

SSL Termination Strategies for Reduced Backend Load

SSL termination at the load balancer level removes encryption overhead from backend servers, significantly improving application performance under heavy workloads. Your AWS Elastic Load Balancing configuration can handle SSL handshakes and certificate management centrally, freeing up compute resources on application servers for business logic processing.

Implementing SSL termination through Terraform lets you manage certificates in one place while maintaining security standards. The CPU saved on backend instances depends on handshake rate, cipher choice, and payload size, so measure before and after rather than assuming a fixed figure. Whatever the number, the freed capacity gives you room for better resource allocation and cost optimization in your high availability Terraform setup.
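A sketch of TLS termination at the load balancer: an HTTPS listener that forwards plain HTTP to the targets, plus an HTTP listener that only redirects. The input variables are hypothetical, and the security policy shown is one of the predefined ELB policies; choose the one that matches your client base.

```hcl
variable "alb_arn" { type = string }
variable "certificate_arn" { type = string }
variable "target_group_arn" { type = string }

resource "aws_lb_listener" "https" {
  load_balancer_arn = var.alb_arn
  port              = 443
  protocol          = "HTTPS"
  ssl_policy        = "ELBSecurityPolicy-TLS13-1-2-2021-06"
  certificate_arn   = var.certificate_arn

  default_action {
    type             = "forward"
    target_group_arn = var.target_group_arn
  }
}

resource "aws_lb_listener" "http_redirect" {
  load_balancer_arn = var.alb_arn
  port              = 80
  protocol          = "HTTP"

  # Send every plain-HTTP request to the HTTPS listener.
  default_action {
    type = "redirect"

    redirect {
      port        = "443"
      protocol    = "HTTPS"
      status_code = "HTTP_301"
    }
  }
}
```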

Request Routing Rules for Efficient Traffic Distribution

Advanced routing rules direct traffic based on request characteristics like headers, paths, and query parameters, optimizing resource utilization across different application components. Your load balancer best practices should include path-based routing to separate API traffic from static content requests, ensuring each backend pool handles appropriate workload types efficiently.

Configure weighted routing and priority rules to implement blue-green deployments and canary releases safely. These Terraform-managed routing strategies enable sophisticated traffic management patterns that support modern deployment practices while maintaining optimal performance distribution across your infrastructure components.
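Path-based and weighted routing can both be expressed as listener rules. In this sketch the ARNs are hypothetical inputs, and the 90/10 split and hostname are assumed values for a canary release.

```hcl
variable "https_listener_arn" { type = string }
variable "api_target_group_arn" { type = string }
variable "blue_target_group_arn" { type = string }
variable "green_target_group_arn" { type = string }

# Path-based rule: API calls go to their own backend pool.
resource "aws_lb_listener_rule" "api" {
  listener_arn = var.https_listener_arn
  priority     = 10

  condition {
    path_pattern {
      values = ["/api/*"]
    }
  }

  action {
    type             = "forward"
    target_group_arn = var.api_target_group_arn
  }
}

# Weighted rule: send a small share of traffic to the new version.
resource "aws_lb_listener_rule" "canary" {
  listener_arn = var.https_listener_arn
  priority     = 20

  condition {
    host_header {
      values = ["app.example.com"] # hypothetical hostname
    }
  }

  action {
    type = "forward"

    forward {
      target_group {
        arn    = var.blue_target_group_arn
        weight = 90
      }

      target_group {
        arn    = var.green_target_group_arn
        weight = 10
      }
    }
  }
}
```

Rules are evaluated in priority order, so the `/api/*` rule wins for API paths even when the host header also matches.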

Monitoring and Alerting Implementation Through Terraform

CloudWatch Metrics Configuration for Load Balancer Health

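CloudWatch gives near-real-time visibility into load balancer performance. Application Load Balancers publish their metrics to the AWS/ApplicationELB namespace at one-minute intervals without extra configuration. Alarm on the essentials, such as RequestCount, TargetResponseTime, and HealthyHostCount, using the aws_cloudwatch_metric_alarm resource. Add UnHealthyHostCount per target group and TargetConnectionErrorCount to track target health and connection errors. Certificate expiry is not a load balancer metric; ACM reports it as DaysToExpiry in the AWS/CertificateManager namespace.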

Custom Alarms Setup for Proactive Issue Detection

Deploy custom CloudWatch alarms through Terraform to catch performance issues before they impact users. Create threshold-based alerts for high latency, error rates exceeding 5%, and unhealthy target percentages. Use SNS topics to route notifications to operations teams and integrate with PagerDuty for after-hours escalation.

- High CPU utilization alarms on target instances (>80% threshold)

- Target response time monitoring (>500ms alerts)

- HTTP 5xx error rate tracking

- Connection timeout notifications
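Two of the alarms above, sketched with assumed thresholds and evaluation windows. `alb_arn_suffix` is a hypothetical input holding the load balancer's `arn_suffix` attribute. `TargetResponseTime` is reported in seconds, so 0.5 corresponds to 500 ms, and the error rate is computed with metric math because CloudWatch publishes counts rather than percentages.

```hcl
variable "alb_arn_suffix" { type = string }

resource "aws_sns_topic" "alerts" {
  name = "alb-alerts" # hypothetical topic name
}

resource "aws_cloudwatch_metric_alarm" "latency" {
  alarm_name          = "alb-target-response-time-high"
  namespace           = "AWS/ApplicationELB"
  metric_name         = "TargetResponseTime"
  statistic           = "Average"
  period              = 60
  evaluation_periods  = 3
  comparison_operator = "GreaterThanThreshold"
  threshold           = 0.5 # seconds
  dimensions          = { LoadBalancer = var.alb_arn_suffix }
  alarm_actions       = [aws_sns_topic.alerts.arn]
}

resource "aws_cloudwatch_metric_alarm" "error_rate" {
  alarm_name          = "alb-5xx-rate-high"
  comparison_operator = "GreaterThanThreshold"
  evaluation_periods  = 3
  threshold           = 5 # percent
  treat_missing_data  = "notBreaching" # no requests means no error rate
  alarm_actions       = [aws_sns_topic.alerts.arn]

  metric_query {
    id          = "rate"
    expression  = "100 * errors / requests"
    label       = "Target 5xx error rate (%)"
    return_data = true
  }

  metric_query {
    id = "errors"
    metric {
      namespace   = "AWS/ApplicationELB"
      metric_name = "HTTPCode_Target_5XX_Count"
      period      = 60
      stat        = "Sum"
      dimensions  = { LoadBalancer = var.alb_arn_suffix }
    }
  }

  metric_query {
    id = "requests"
    metric {
      namespace   = "AWS/ApplicationELB"
      metric_name = "RequestCount"
      period      = 60
      stat        = "Sum"
      dimensions  = { LoadBalancer = var.alb_arn_suffix }
    }
  }
}
```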

Access Logs Configuration for Traffic Analysis

Enable detailed access logs for your load balancers using the access_logs configuration block of the aws_lb resource. Store logs in S3 buckets with lifecycle policies to manage costs while meeting compliance requirements. Because the logs land in S3 rather than CloudWatch Logs, query them with Amazon Athena or a third-party log platform to analyze traffic patterns, identify bottlenecks, and optimize resource allocation based on actual usage data.
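A lifecycle policy for the log bucket might look like this. The bucket name and retention periods are assumptions, and the bucket policy that grants Elastic Load Balancing write access is omitted from the sketch.

```hcl
resource "aws_s3_bucket" "lb_logs" {
  bucket = "example-lb-access-logs" # hypothetical; names must be globally unique
}

resource "aws_s3_bucket_lifecycle_configuration" "lb_logs" {
  bucket = aws_s3_bucket.lb_logs.id

  rule {
    id     = "expire-access-logs"
    status = "Enabled"

    filter {} # apply to every object in the bucket

    # Move older logs to cheaper storage, then delete them.
    transition {
      days          = 30
      storage_class = "STANDARD_IA"
    }

    expiration {
      days = 365 # assumed retention; align with your compliance rules
    }
  }
}
```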

Security Hardening and Compliance Best Practices

WAF Integration for Application Layer Protection

Implementing AWS WAF with your Terraform AWS infrastructure adds crucial application-layer security to your load balancer setup. Configure WAF rules to block SQL injection attacks, cross-site scripting, and suspicious traffic patterns that could overwhelm your high traffic load balancer. Use Terraform to define custom rule groups and rate limiting policies that automatically scale with your infrastructure.
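A sketch of a regional web ACL with a per-IP rate limit and an AWS managed SQL injection rule group, attached to a load balancer. The names, the limit of 2000 requests per five-minute window, and `alb_arn` are assumptions.

```hcl
variable "alb_arn" { type = string }

resource "aws_wafv2_web_acl" "alb" {
  name  = "alb-web-acl" # hypothetical name
  scope = "REGIONAL"    # required scope for load balancers

  default_action {
    allow {}
  }

  rule {
    name     = "rate-limit-per-ip"
    priority = 1

    action {
      block {}
    }

    statement {
      rate_based_statement {
        limit              = 2000 # requests per 5 minutes per IP; assumed
        aggregate_key_type = "IP"
      }
    }

    visibility_config {
      cloudwatch_metrics_enabled = true
      metric_name                = "rate-limit-per-ip"
      sampled_requests_enabled   = true
    }
  }

  rule {
    name     = "aws-managed-sqli"
    priority = 2

    # Managed rule groups use override_action instead of action.
    override_action {
      none {}
    }

    statement {
      managed_rule_group_statement {
        name        = "AWSManagedRulesSQLiRuleSet"
        vendor_name = "AWS"
      }
    }

    visibility_config {
      cloudwatch_metrics_enabled = true
      metric_name                = "aws-managed-sqli"
      sampled_requests_enabled   = true
    }
  }

  visibility_config {
    cloudwatch_metrics_enabled = true
    metric_name                = "alb-web-acl"
    sampled_requests_enabled   = true
  }
}

resource "aws_wafv2_web_acl_association" "alb" {
  resource_arn = var.alb_arn
  web_acl_arn  = aws_wafv2_web_acl.alb.arn
}
```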

SSL/TLS Certificate Management with ACM

AWS Certificate Manager streamlines SSL certificate provisioning for your AWS ALB Terraform configuration. Set up automatic certificate renewal and multi-domain support through Terraform resource blocks, ensuring encrypted connections remain uninterrupted. Your load balancer best practices should include certificate validation automation and proper listener configuration for both HTTP and HTTPS traffic routing.
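Automated DNS validation could be sketched as follows, assuming the domain is hosted in Route 53. The domain names and `hosted_zone_id` are hypothetical.

```hcl
variable "hosted_zone_id" { type = string }

resource "aws_acm_certificate" "app" {
  domain_name               = "app.example.com"   # hypothetical
  subject_alternative_names = ["api.example.com"] # hypothetical
  validation_method         = "DNS"

  # Issue the replacement before the old certificate is removed.
  lifecycle {
    create_before_destroy = true
  }
}

# One validation record per domain on the certificate.
resource "aws_route53_record" "validation" {
  for_each = {
    for dvo in aws_acm_certificate.app.domain_validation_options : dvo.domain_name => {
      name   = dvo.resource_record_name
      type   = dvo.resource_record_type
      record = dvo.resource_record_value
    }
  }

  zone_id         = var.hosted_zone_id
  name            = each.value.name
  type            = each.value.type
  records         = [each.value.record]
  ttl             = 60
  allow_overwrite = true
}

# Blocks until ACM has seen the records and issued the certificate.
resource "aws_acm_certificate_validation" "app" {
  certificate_arn         = aws_acm_certificate.app.arn
  validation_record_fqdns = [for r in aws_route53_record.validation : r.fqdn]
}
```

Pointing the HTTPS listener at `aws_acm_certificate_validation.app.certificate_arn` makes Terraform wait for issuance before creating the listener. Leaving the validation records in place lets ACM renew the certificate without manual steps.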

Network Security Group Rules for Restricted Access

Security groups act as virtual firewalls controlling inbound and outbound traffic to your load balancer infrastructure. Define restrictive rules allowing only necessary ports and protocols, typically port 443 for HTTPS and port 80 for HTTP redirects. Configure separate security groups for different application tiers to maintain network segmentation and minimize attack surface exposure.

VPC Flow Logs Configuration for Traffic Monitoring

VPC Flow Logs provide detailed network traffic analysis for your AWS ELB security hardening strategy. Enable flow logs at subnet and VPC levels to capture connection metadata such as source and destination addresses, ports, protocol, and whether traffic was accepted or rejected. Store logs in CloudWatch Logs or S3 for long-term analysis and compliance reporting, helping identify unusual traffic patterns and potential security threats.
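A VPC-level flow log delivered to S3 can be sketched in a few lines. `vpc_id` and `flow_log_bucket_arn` are hypothetical inputs; a subnet-level log would set `subnet_id` instead of `vpc_id`.

```hcl
variable "vpc_id" { type = string }
variable "flow_log_bucket_arn" { type = string }

resource "aws_flow_log" "vpc" {
  vpc_id                   = var.vpc_id
  traffic_type             = "ALL" # ACCEPT, REJECT, or ALL
  log_destination_type     = "s3"
  log_destination          = var.flow_log_bucket_arn
  max_aggregation_interval = 60 # seconds; the alternative is 600
}
```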

Cost Optimization Strategies for Production Environments

Right-Sizing Load Balancer Capacity for Actual Demand

AWS Elastic Load Balancing costs scale with traffic volume and connection patterns. Application Load Balancers charge an hourly rate plus Load Balancer Capacity Units (LCUs), which measure new connections, active connections, processed bytes, and rule evaluations; you pay for whichever dimension is highest in each hour. The load balancer scales itself, so right-sizing mostly means right-sizing what sits behind it: monitor CloudWatch metrics to identify peak usage patterns and configure Auto Scaling policies in Terraform so target capacity follows demand. Health checks and weighted routing keep traffic on the targets that can serve it, which avoids holding extra instances in reserve during low-traffic periods.

Reserved Instance Planning for Predictable Workloads

Elastic Load Balancing itself is billed on demand, as an hourly charge plus capacity units (LCUs for ALBs, NLCUs for NLBs), with no Reserved Instance discount on the load balancer. Commitment-based savings apply to the compute behind it. Analyze historical load patterns using AWS Cost Explorer to identify stable baseline requirements, then cover that baseline of EC2 or Fargate targets with Reserved Instances or Savings Plans on one- or three-year terms. Leave capacity above the baseline on demand so Auto Scaling can add and remove it freely.

Resource Tagging for Accurate Cost Allocation

Implement comprehensive resource tagging strategies through Terraform infrastructure as code to track load balancer expenses across departments, projects, and environments. Configure automated tagging policies for AWS ALB Terraform deployments using variables for cost center identification, environment classification, and application ownership. Proper tagging enables detailed cost analysis, budget allocation accuracy, and chargeback mechanisms for multi-tenant architectures supporting high traffic load balancer configurations.
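One low-effort way to apply consistent tags is the provider's `default_tags` block, sketched here with hypothetical tag keys and variables. If the module already declares a default `aws` provider, merge the block into it rather than adding a second one.

```hcl
variable "aws_region" { type = string }
variable "cost_center" { type = string }
variable "environment" { type = string }
variable "application" { type = string }

provider "aws" {
  region = var.aws_region

  # Applied to every taggable resource this provider creates.
  default_tags {
    tags = {
      CostCenter  = var.cost_center
      Environment = var.environment
      Application = var.application
      ManagedBy   = "terraform"
    }
  }
}
```

Tags must also be activated as cost allocation tags in the billing console before they appear in Cost Explorer.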

Building a robust load balancing solution with Terraform gives you the power to handle massive traffic while keeping your AWS infrastructure reliable and cost-effective. We’ve covered the different types of AWS load balancers, essential Terraform configurations, and proven architecture patterns that can scale with your growing demands. The monitoring, security, and optimization techniques we discussed will help you maintain peak performance even during traffic spikes.

Start implementing these practices in your own environment by choosing the right load balancer type for your specific needs and gradually building out your Terraform configurations. Focus on getting your monitoring and alerting set up first – you’ll thank yourself later when you can spot issues before they impact your users. Remember that great infrastructure isn’t built overnight, so take it step by step and test everything thoroughly in a staging environment before rolling it out to production.

The post AWS Elastic Load Balancing with Terraform: Best Practices for High Traffic Systems first appeared on Business Compass LLC.

from Business Compass LLC https://ift.tt/Pnx0G3J
